Most AI assistants fail silently.

They don’t crash.

They don’t throw errors.

They just slowly become less accurate, less helpful, and more expensive.

In production, the biggest risk for AI agents isn’t the model.

It’s the lack of observability.

This article explains what AI observability really means, why traditional analytics aren’t enough, and how Monobot approaches monitoring, QA, and continuous improvement for voice and chat agents.

Why AI Agents Degrade Over Time

Unlike traditional software, AI agents don’t stay static.

Over time:

- user behavior changes

- new edge cases appear

- policies and pricing evolve

- knowledge becomes outdated

- traffic volume increases

- integrations change

Without visibility, small issues compound.

By the time a team notices:

- containment rate is down

- escalations are up

- customers are frustrated

…the damage is already done.

Why Traditional Analytics Don’t Work for AI

Standard metrics like:

- number of messages

- call duration

- average response time

tell you what happened, but not why.

AI systems need a different level of insight.

You need to understand:

- which intents fail

- where knowledge retrieval breaks

- when the agent guessed instead of knowing

- why handoff was triggered

- which answers cause confusion

This is where AI observability starts.

What “Observability” Means for AI Agents

For AI voice and chat agents, observability answers five questions:

- Did the agent resolve the request?

- If not, why not?

- Was the knowledge missing, unclear, or wrong?

- Was escalation necessary or avoidable?

- What should be improved next?

Observability is not just dashboards.

It’s structured insight into agent behavior.

Key Signals to Monitor in Production AI

1. Containment vs Escalation

- How many conversations are fully resolved by AI?

- What percentage is escalated to humans?

- Which intents escalate most often?

High escalation isn’t always bad, but unexplained escalation is.
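A minimal sketch of how these three questions turn into numbers. It assumes each conversation is logged with an intent, a resolved flag, an escalated flag and an optional handoff reason. The `Conversation` record and its field names are hypothetical and are not Monobot's schema. An escalation with no recorded reason is counted as "unexplained".

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


# Hypothetical conversation record; field names are illustrative, not a Monobot schema.
@dataclass
class Conversation:
    intent: str
    resolved_by_ai: bool                  # closed without a human taking over
    escalated: bool                       # handed off to a human agent
    handoff_reason: Optional[str] = None  # None if not escalated or no reason logged


def containment_report(convs: list[Conversation]) -> dict:
    """Containment and escalation rates, plus where escalations concentrate."""
    denom = len(convs) or 1  # avoid division by zero on an empty window
    escalated = [c for c in convs if c.escalated]
    contained = sum(1 for c in convs if c.resolved_by_ai and not c.escalated)
    return {
        "containment_rate": contained / denom,
        "escalation_rate": len(escalated) / denom,
        # Intents ranked by number of escalations, most frequent first
        "escalations_by_intent": Counter(c.intent for c in escalated).most_common(),
        # Escalations with no recorded reason are the "unexplained" ones
        "unexplained_escalations": sum(1 for c in escalated if c.handoff_reason is None),
    }
```

The two rates do not have to add up to 1. Conversations the user abandons count as neither contained nor escalated, and that gap is itself worth watching.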

2. Knowledge Base Coverage

Track:

- unanswered questions

- fallback responses

- “I’m not sure” cases

- repeated clarifications

These signals show where the knowledge base needs work.
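One way to count these signals per topic is sketched below, under several assumptions. Each turn is assumed to record how many KB passages were retrieved and whether a fallback fired. "Unanswered" is approximated as "zero passages retrieved". "Repeated clarification" means two clarifying turns in a row. The `Turn` record and the phrase list are hypothetical illustrations.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


# Hypothetical per-turn record; field names are illustrative, not a Monobot schema.
@dataclass
class Turn:
    topic: str
    retrieved_docs: int       # KB passages returned for this turn
    used_fallback: bool       # agent answered with a generic fallback response
    answer: str               # text the agent actually said
    is_clarification: bool    # agent asked the user to rephrase or clarify


# Illustrative uncertainty phrases; tune to your agent's actual wording.
UNSURE_MARKERS = ("i'm not sure", "i am not sure", "i don't know")


def kb_gap_signals(conversations: list[list[Turn]]) -> dict[str, Counter]:
    """Count knowledge-base gap signals per topic across ordered conversations."""
    signals: dict[str, Counter] = defaultdict(Counter)
    for turns in conversations:
        prev_clarification = False
        for t in turns:
            s = signals[t.topic]
            if t.retrieved_docs == 0:
                s["unanswered"] += 1
            if t.used_fallback:
                s["fallback"] += 1
            # Normalize curly apostrophes before matching phrases
            text = t.answer.lower().replace("\u2019", "'")
            if any(m in text for m in UNSURE_MARKERS):
                s["not_sure"] += 1
            if t.is_clarification and prev_clarification:
                s["repeated_clarification"] += 1
            prev_clarification = t.is_clarification
    return dict(signals)


def worst_topics(signals: dict[str, Counter], top: int = 5) -> list[tuple[str, int]]:
    """Topics ranked by total gap signals, highest first."""
    totals = [(topic, sum(c.values())) for topic, c in signals.items()]
    return sorted(totals, key=lambda kv: kv[1], reverse=True)[:top]
```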

3. Intent Drift

Over time, users ask the same thing differently.

Monitoring intent distribution helps you spot:

- new phrasing patterns

- emerging topics

- outdated intent mappings

Without this, intent accuracy slowly decays.
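The sketch below compares the intent distribution of a baseline window with a recent window. It uses Jensen-Shannon divergence, which is one common choice among several possible distance measures, and it also lists newly appearing intents and the largest share changes. It assumes the intent classifier emits one label per conversation, including some catch-all label such as `"fallback"`; that label name is hypothetical. The function names are illustrative, and any alerting threshold is left to you.

```python
import math
from collections import Counter


def distribution(labels: list[str]) -> dict[str, float]:
    """Share of each intent label in a window."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {k: v / total for k, v in counts.items()}


def js_divergence(p: dict[str, float], q: dict[str, float]) -> float:
    """Jensen-Shannon divergence in bits (0 = identical, 1 = disjoint)."""
    keys = set(p) | set(q)
    m = {k: 0.5 * (p.get(k, 0.0) + q.get(k, 0.0)) for k in keys}

    def kl_to_m(a: dict[str, float]) -> float:
        # Terms with zero probability contribute nothing to the sum
        return sum(a[k] * math.log2(a[k] / m[k]) for k in keys if a.get(k, 0.0) > 0)

    return 0.5 * kl_to_m(p) + 0.5 * kl_to_m(q)


def drift_report(baseline: list[str], current: list[str], top: int = 3) -> dict:
    p, q = distribution(baseline), distribution(current)
    shifts = {k: q.get(k, 0.0) - p.get(k, 0.0) for k in set(p) | set(q)}
    return {
        "js_divergence": js_divergence(p, q),
        "new_intents": sorted(set(q) - set(p)),
        # Largest absolute changes in share, e.g. a growing fallback bucket
        "biggest_shifts": sorted(shifts.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top],
    }
```

A rising share for the catch-all label often points to new phrasing patterns, and labels with no baseline history point to emerging topics.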

4. Voice-Specific Failures

For voice agents, you also need to track:

- ASR misrecognitions

- interruptions

- long pauses

- repeated confirmations

Voice failures feel much worse to users than chat failures: a caller can’t scroll back, reread an answer, or skim past a bad one.

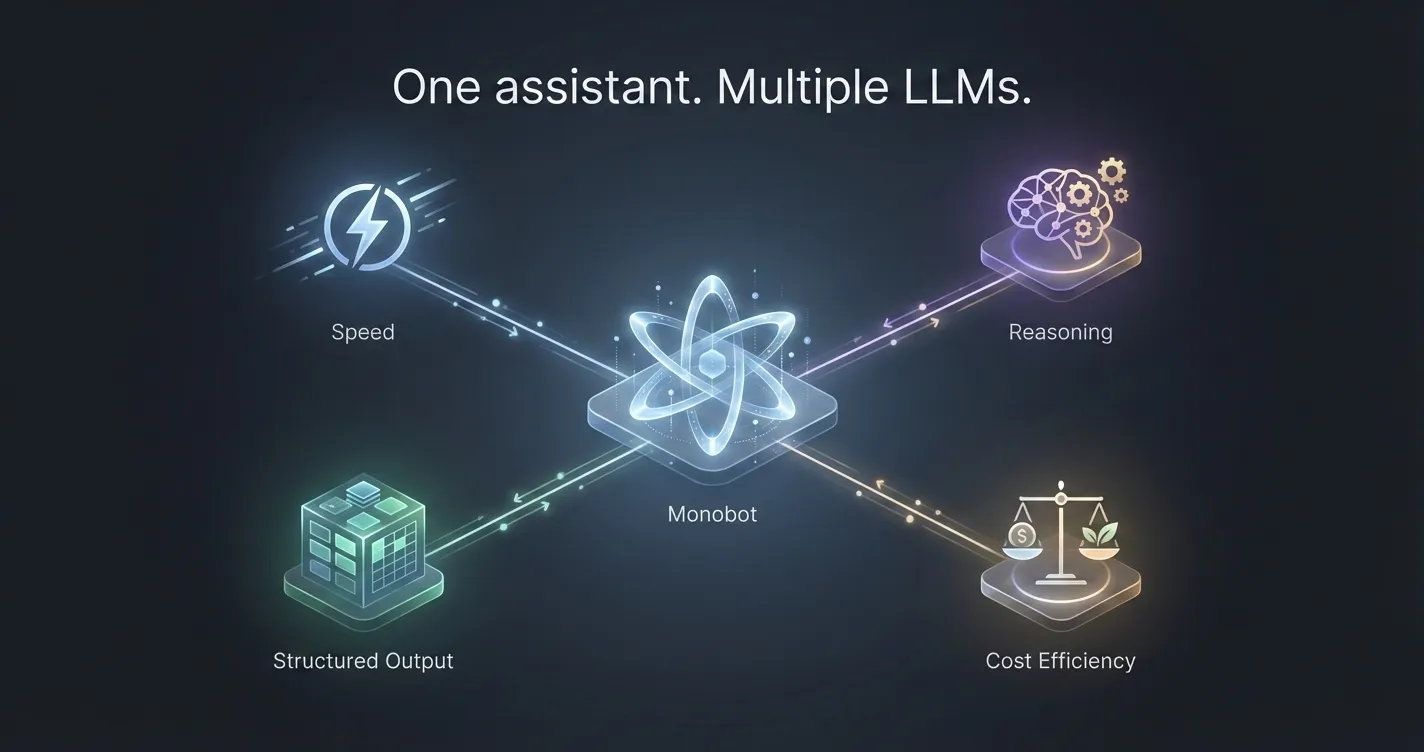
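The voice signals listed above can be derived from turn timings, as the following sketch shows. It assumes the transcript gives a start and end time for each turn and that the ASR reports a confidence score for user turns. Low ASR confidence is only a proxy for misrecognition. The `VoiceTurn` record, the confirmation phrases, and the default thresholds are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical per-turn timing record from a call transcript; not a Monobot schema.
@dataclass
class VoiceTurn:
    speaker: str                            # "user" or "agent"
    start: float                            # seconds from call start
    end: float
    text: str
    asr_confidence: Optional[float] = None  # user turns only, if the ASR reports it


# Illustrative phrases; a real deployment would tune these per language and prompt set.
CONFIRM_PHRASES = ("to confirm", "did you say", "is that correct")


def voice_flags(turns, pause_s=3.0, min_asr_conf=0.6, max_confirmations=1):
    """Return (flag, time_in_seconds) pairs for one call, in detection order."""
    flags = []
    # Timing-based signals look at each pair of consecutive turns.
    for prev, cur in zip(turns, turns[1:]):
        gap = cur.start - prev.end
        if gap > pause_s:
            flags.append(("long_pause", cur.start))
        if prev.speaker == "agent" and cur.speaker == "user" and gap < 0:
            # The user started talking before the agent finished (barge-in).
            flags.append(("interruption", cur.start))
    confirmations = []
    for t in turns:
        if t.speaker == "user" and t.asr_confidence is not None and t.asr_confidence < min_asr_conf:
            # Low confidence suggests, but does not prove, a misrecognition.
            flags.append(("low_asr_confidence", t.start))
        if t.speaker == "agent" and any(p in t.text.lower() for p in CONFIRM_PHRASES):
            confirmations.append(t.start)
    if len(confirmations) > max_confirmations:
        # Flag at the first confirmation prompt beyond the allowed number.
        flags.append(("repeated_confirmation", confirmations[max_confirmations]))
    return flags
```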

5. Cost vs Value Signals

AI that works but costs too much is also a failure.

You need visibility into:

- model usage per intent

- expensive flows vs simple ones

- opportunities for lighter models

This is where observability meets optimization.
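To connect cost with value, model spend can be rolled up per intent and divided by resolved conversations. The sketch below assumes every model call is logged with its conversation, intent, model, and token counts. The model names and the `PRICES` table are placeholders, not real vendor pricing. The `Call` record is hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

# Placeholder prices in USD per 1,000 tokens (input, output); NOT real vendor pricing.
PRICES = {
    "large-model": (0.010, 0.030),
    "small-model": (0.001, 0.002),
}


# Hypothetical per-call usage record.
@dataclass
class Call:
    conversation_id: str
    intent: str
    model: str
    input_tokens: int
    output_tokens: int


def call_cost(c: Call) -> float:
    price_in, price_out = PRICES[c.model]
    return c.input_tokens / 1000 * price_in + c.output_tokens / 1000 * price_out


def cost_by_intent(calls: list[Call], resolved_ids: set[str]) -> dict[str, dict]:
    spend = defaultdict(float)
    convs = defaultdict(set)
    resolved = defaultdict(set)
    for c in calls:
        spend[c.intent] += call_cost(c)
        convs[c.intent].add(c.conversation_id)
        if c.conversation_id in resolved_ids:
            resolved[c.intent].add(c.conversation_id)
    report = {}
    for intent, total in spend.items():
        n_resolved = len(resolved[intent])
        report[intent] = {
            "total_cost": total,
            "cost_per_conversation": total / len(convs[intent]),
            # inf means money was spent without a single resolution
            "cost_per_resolution": total / n_resolved if n_resolved else float("inf"),
        }
    return report
```

Some intents are simple, resolve reliably, and still show high cost per conversation. Those are natural candidates for an A/B test with a lighter model. The sketch only surfaces them; it does not decide whether to switch.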

How Monobot Enables AI Observability

Monobot treats AI agents as production systems, not demos.

That means:

- structured logging of conversations

- visibility into resolution and escalation

- knowledge base interaction tracking

- support for QA workflows and reviews

- analytics across voice and chat

Instead of guessing what to improve, teams can see it.

From Observability to Continuous Improvement

The real value comes when observability feeds action.

A healthy loop looks like this:

- Monitor agent behavior

- Identify failing intents or KB gaps

- Update knowledge, flows, or routing

- Validate improvement

- Repeat weekly
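A week-over-week bump in containment can be noise, which makes the "validate improvement" step easy to skip. One simple check is a two-proportion z-test comparing containment before and after a change. The sketch below assumes conversations are independent and that traffic mix is comparable between the two windows; neither is guaranteed in practice. The function name is illustrative.

```python
import math


def two_proportion_z(success_a: int, n_a: int, success_b: int, n_b: int) -> tuple[float, float]:
    """z statistic and two-sided p-value for H0: rate_a == rate_b (b is 'after')."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both windows are all-contained or all-escalated: no difference to test.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Example: containment went from 120/200 to 150/200 for one intent after a KB update.
z, p = two_proportion_z(120, 200, 150, 200)
```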

AI agents improve fastest when treated like a living product, not a one-time setup.

Why This Matters for Production Teams

AI agents don’t fail loudly.

They fail quietly.

Observability is what turns AI from a black box into a controllable system.

If you’re running AI in production, especially voice agents, visibility is not optional.

It’s infrastructure.

Final Thought

Better models help.

Better prompts help.

But visibility is what keeps AI working long-term.

That’s why Monobot focuses not only on building AI agents but also on making them observable, testable, and continuously improvable in real-world environments.